
Harnessed Coding Agents: What Minions and Codex Teach About AI Code Review

Feb 22, 2026

Coding agents are no longer just demos that write a patch. The new wave of systems wraps the model in a harness: tool contracts, verification loops, and explicit constraints that make results dependable. That shift changes how AI code review should be designed. The most effective teams treat review as a system with inputs, checks, and quality gates, not a single prompt.

Key Takeaways

Harnessed agents rely on structured tools and verification loops, which is the same pattern AI code review needs to be trustworthy.

One-shot coding agents highlight a core truth: planning alone is not enough. You need a repeatable execution loop and explicit quality gates.

Review quality improves when inputs are standardized, reviews are scored, and high-impact changes are routed through deeper checks.

Independent review catches more defects because it avoids shared blind spots that happen when the same model family writes and reviews the change.

The best review stacks separate context building, review generation, verification, and final summaries so errors are isolated early.

Propel teams operationalize this harness with risk tiers, model routing, and metrics that track usefulness, not just volume.

TL;DR

The trend toward harnessed coding agents shows that AI needs repeatable execution loops, tool contracts, and verification to be reliable. Apply the same idea to AI code review by standardizing inputs, routing by risk, and measuring review usefulness. When review is a system, not a prompt, teams get higher signal and faster merges.

Why harnessed coding agents are suddenly everywhere

The most interesting agent launches in 2026 are not about bigger models. They are about harnesses: layers that constrain how the model plans, calls tools, verifies output, and reports results. Stripe, for example, framed its Minions project as one-shot, end-to-end coding agents driven by a tightly controlled execution loop.

The best explanation of the harness mindset came from recent commentary on OpenAI Codex. The point was not just that the model improved. It was that the system around the model makes it possible to iterate, verify, and finish tasks reliably.

Further reading: the Stripe Minions announcement and How Codex is being harnessed.

What "one-shot" and "harnessed" really mean

One-shot does not mean that a model solves everything in a single response. It means the system can take a task description and reach a completed outcome with minimal human intervention. The harness provides the steps that make this possible:

- Inputs are normalized so the model sees consistent context.

- Tool calls are constrained so the model cannot wander.

- Verification is explicit, not implied.

- Outputs are summarized and scored against expectations.

That stack is exactly what AI code review needs. Review output is not useful if it is unreliable, noisy, or missing high-risk issues. A review harness gives teams repeatable behavior, which means engineering leaders can trust the results.

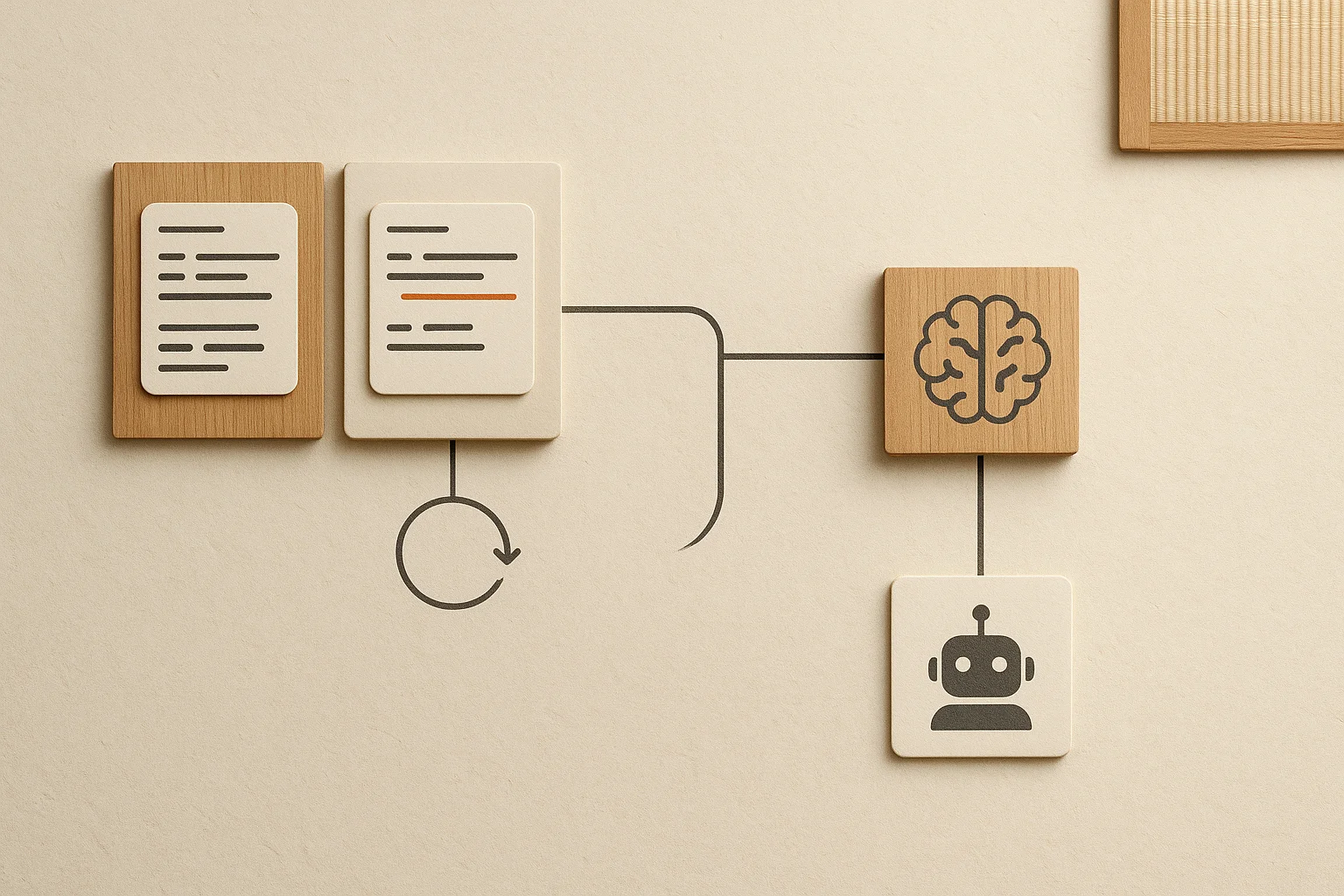

AI code review is a system, not a prompt

Code review already has a harness in human workflows. We use checklists, reviewer gates, and required approval rules to keep changes safe. GitHub's pull request review rules are a reminder that structured review rules exist for a reason.

The same idea applies to AI review. The model is just one component. The system needs:

- Standard inputs, including diffs, tests, and policy context.

- Tool access for repository search, test results, and dependency metadata.

- Verification steps that check claims against evidence.

- Quality gates that decide if human review is required.

Our guide on agent guardrails goes deeper on why these controls matter in production review pipelines.

Define a review harness with explicit contracts

A harness starts by defining what the AI can and cannot do. That means explicit contracts for inputs, tools, and outputs. We typically suggest four contracts:

- Input contract: the diff, test status, risk tier, and policy summary are always present.

- Tool contract: repository search, file reads, and test logs are allowed, and nothing else.

- Output contract: every finding includes evidence, severity, and fix guidance.

- Escalation contract: high-risk changes trigger human review or extra checks.
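The four contracts can be sketched as plain data types that validate at the boundary. This is a minimal illustration, not Propel's implementation: the class names, the tool names `search`, `read_file`, and `read_test_logs`, and the tier and severity labels are hypothetical placeholders. Rejecting malformed inputs and findings at construction time is what makes the later stages safe to automate.

```python
from dataclasses import dataclass

# Hypothetical allow-lists; the contracts above are defined in prose only.
ALLOWED_TOOLS = frozenset({"search", "read_file", "read_test_logs"})
RISK_TIERS = ("low", "medium", "high")
SEVERITIES = ("low", "medium", "high", "critical")


@dataclass(frozen=True)
class ReviewInput:
    """Input contract: diff, test status, risk tier, and policy summary are always present."""

    diff: str
    test_status: str
    risk_tier: str
    policy_summary: str

    def __post_init__(self) -> None:
        for name in ("diff", "test_status", "policy_summary"):
            if not getattr(self, name).strip():
                raise ValueError(f"input contract: {name} is required")
        if self.risk_tier not in RISK_TIERS:
            raise ValueError(f"input contract: unknown risk tier {self.risk_tier!r}")


def check_tool_call(tool_name: str) -> None:
    """Tool contract: any tool outside the allow-list is rejected."""
    if tool_name not in ALLOWED_TOOLS:
        raise PermissionError(f"tool contract: {tool_name!r} is not allowed")


@dataclass(frozen=True)
class Finding:
    """Output contract: every finding carries evidence, severity, and fix guidance."""

    message: str
    evidence: str
    severity: str
    fix_guidance: str

    def __post_init__(self) -> None:
        if self.severity not in SEVERITIES:
            raise ValueError(f"output contract: unknown severity {self.severity!r}")
        if not self.evidence.strip() or not self.fix_guidance.strip():
            raise ValueError("output contract: evidence and fix guidance are required")


def needs_escalation(review_input: ReviewInput) -> bool:
    """Escalation contract: high-risk changes go to a human or extra checks."""
    return review_input.risk_tier == "high"
```

Because every stage after this point can assume well-formed inputs and findings, suppression rules and routing logic stay simple.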

This is where teams see immediate improvements in signal. The same principle shows up in our false positive reduction guide, because consistent inputs make it easier to suppress low-value comments.

Build a verification loop, not just a review output

A harnessed agent always verifies. AI review should too. The loop can be simple, but it needs to be explicit. A common pattern is:

- Generate findings based on diff and tests.

- Verify each finding with repository search or file evidence.

- Tag severity and confidence.

- Decide routing: auto-approve, require human review, or request changes.
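A minimal sketch of steps 2 through 4, assuming each finding arrives as a structured record that quotes the code it refers to. A dictionary of file contents stands in for the repository search and file-read tools, and the confidence values and routing rules are illustrative assumptions rather than recommended settings. Step 1, generating findings, is the model call and is not shown.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    path: str               # file the finding points at
    quote: str              # code the model claims appears in that file
    message: str
    severity: str           # "low", "medium", or "high" (illustrative scale)
    confidence: float = 0.0
    verified: bool = False


def verify(finding: Finding, files: dict[str, str]) -> Finding:
    """Step 2: a finding counts only if the quoted code really exists in the file."""
    finding.verified = bool(finding.quote) and finding.quote in files.get(finding.path, "")
    return finding


def tag_confidence(finding: Finding) -> Finding:
    """Step 3: assumed rule, verified evidence means high confidence."""
    finding.confidence = 0.9 if finding.verified else 0.2
    return finding


def decide_route(findings: list[Finding]) -> str:
    """Step 4: auto-approve, require human review, or request changes."""
    verified = [f for f in findings if f.verified]
    if any(f.severity == "high" for f in verified):
        return "request_changes"
    # Verified lower-severity findings, or high-severity claims that could not
    # be verified, still deserve a human look.
    if verified or any(f.severity == "high" for f in findings):
        return "require_human_review"
    return "auto_approve"


def review_loop(findings: list[Finding], files: dict[str, str]) -> tuple[list[Finding], str]:
    """Run steps 2-4 over findings produced by step 1."""
    checked = [tag_confidence(verify(f, files)) for f in findings]
    return [f for f in checked if f.verified], decide_route(checked)
```

Unverified findings are dropped from the posted review but still influence routing, so a serious claim the harness could not confirm is escalated instead of silently discarded.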

When verification is skipped, teams pay the price in missed defects or noisy reviews. The playbook in our AI code review guide shows how to operationalize verification in day-to-day workflows.

Score review quality the same way you score humans

Harnesses are only as good as the metrics that drive them. Instead of counting the number of comments, score outcomes that matter. Our research on review usefulness shows why activity metrics miss the point.

| Metric | What it measures | Target |
|---|---|---|
| Useful findings rate | Percent of AI comments that lead to a change in the PR | Above 60 percent |
| High severity miss rate | Percent of critical issues missed by AI review | Below 5 percent |
| Time to first review | Minutes from PR open to first AI feedback | Below 10 minutes |
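One way to compute these three metrics from per-PR review records. The record fields are hypothetical; in practice they would come from PR history and incident tracking. The sketch assumes the miss rate counts critical issues found at any point, including after merge, and it uses the median for time to first review, which is a choice rather than something the table specifies.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class ReviewedPR:
    ai_comments: int               # comments the AI reviewer left
    comments_acted_on: int         # AI comments that led to a change in the PR
    critical_issues: int           # critical issues found at any point, including after merge
    critical_flagged_by_ai: int    # how many of those the AI review flagged
    minutes_to_first_ai_review: float


# Targets from the metrics table.
TARGETS = {
    "useful_findings_rate": 0.60,      # should be above
    "high_severity_miss_rate": 0.05,   # should be below
    "time_to_first_review_min": 10.0,  # should be below
}


def review_metrics(prs: list[ReviewedPR]) -> dict[str, float]:
    if not prs:
        raise ValueError("need at least one reviewed PR")
    comments = sum(p.ai_comments for p in prs)
    acted_on = sum(p.comments_acted_on for p in prs)
    critical = sum(p.critical_issues for p in prs)
    flagged = sum(p.critical_flagged_by_ai for p in prs)
    return {
        "useful_findings_rate": acted_on / comments if comments else 0.0,
        "high_severity_miss_rate": (critical - flagged) / critical if critical else 0.0,
        "time_to_first_review_min": median(p.minutes_to_first_ai_review for p in prs),
    }


def meets_targets(m: dict[str, float]) -> dict[str, bool]:
    return {
        "useful_findings_rate": m["useful_findings_rate"] > TARGETS["useful_findings_rate"],
        "high_severity_miss_rate": m["high_severity_miss_rate"] < TARGETS["high_severity_miss_rate"],
        "time_to_first_review_min": m["time_to_first_review_min"] < TARGETS["time_to_first_review_min"],
    }
```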

For deeper measurement strategies, see our breakdown of code review metrics, which maps to the same outcomes used in human review.

Route by risk and keep the harness aligned

Harnesses fail when they treat every PR the same. Risk-based routing lets you scale safely: low-risk changes can be fast and automated, while high-risk changes get deeper analysis and human escalation. Our AI-first development patterns show how to make this work in production.
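A sketch of risk-based routing, assuming risk can be approximated from the paths a PR touches and its size. The path prefixes, the 200-line and 10-file thresholds, and the reviewer path names are hypothetical. The tier-to-reviewer mapping follows the pattern table in the independence section of this article.

```python
from dataclasses import dataclass

# Hypothetical signals; tune per repository.
HIGH_RISK_PREFIXES = ("auth/", "payments/", "migrations/", "infra/")
MEDIUM_RISK_MAX_LINES = 200
MEDIUM_RISK_MAX_FILES = 10

# Reviewer path per tier, mirroring the independence pattern table.
ROUTES = {
    "low": {"reviewer": "same_model_fast_pass", "human_review": False},
    "medium": {"reviewer": "different_model_family", "human_review": False},
    "high": {"reviewer": "independent_model_plus_policy_verifier", "human_review": True},
}


@dataclass(frozen=True)
class PullRequest:
    changed_files: tuple[str, ...]
    lines_changed: int


def risk_tier(pr: PullRequest) -> str:
    # Any sensitive path makes the whole PR high risk.
    if any(path.startswith(HIGH_RISK_PREFIXES) for path in pr.changed_files):
        return "high"
    # Large changes get a deeper pass even outside sensitive paths.
    if pr.lines_changed > MEDIUM_RISK_MAX_LINES or len(pr.changed_files) > MEDIUM_RISK_MAX_FILES:
        return "medium"
    return "low"


def route_pr(pr: PullRequest) -> dict:
    tier = risk_tier(pr)
    return {"tier": tier, **ROUTES[tier]}
```

Keeping the rules as data makes it easy to audit why a PR landed in a given tier and to tighten thresholds as metrics come in.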

We also recommend reducing model overlap where possible. If one model writes and reviews code, blind spots are more likely. Our article on model synchopathy explains why diversified reviewers catch more issues.

Why independent code review performs better than self review

Independent review is better because it reduces correlated mistakes. When the same model family generates and reviews code, both steps share similar priors, shortcuts, and failure patterns. The reviewer may confirm the exact reasoning path that created the bug.

In practice, independent reviewers improve risk capture in three ways:

- They challenge assumptions differently, especially around edge cases, null handling, and authorization paths.

- They reduce confirmation bias, where a generated implementation is treated as correct simply because it looks internally consistent.

- They improve calibration by providing a second, independent confidence signal before merge decisions.

You do not need a complex platform to start. Keep the writer and reviewer separated by model choice, prompt role, and verification tooling. Then route high-risk pull requests to the most independent path available. This is where teams usually see the largest reduction in high severity misses.

| Pattern | Typical outcome | Preferred setup |
|---|---|---|
| Same model writes and reviews | Higher agreement, lower defect discovery depth | Use only for low-risk PRs |
| Different reviewer model family | Lower agreement, stronger edge case discovery | Default for medium-risk PRs |
| Independent model plus policy verifier | Best risk capture and auditability | Required for high-risk PRs |

Independence is not only about swapping to a different model. Teams get better results when they separate the writer and reviewer along three levers at once: model family, review prompt objective, and verification toolchain. If any one of these stays shared, correlated misses can still slip through.

- Model independence: use a different reviewer model family for medium- and high-risk changes.

- Objective independence: force reviewer prompts to challenge assumptions and seek failure evidence, not just style fixes.

- Tool independence: run reviewer checks against test logs, policy rules, and dependency signals that the writer path did not use.
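The three levers can be checked mechanically before a review runs. In this hypothetical sketch, `AgentConfig` and the family, objective, and tool labels are placeholders, and tool independence is approximated as the reviewer using at least one evidence source the writer did not.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentConfig:
    model_family: str        # placeholder labels, e.g. "family-a"
    prompt_objective: str    # e.g. "implement" or "find_failures"
    tools: frozenset[str]    # checks and evidence sources the agent uses


def shared_levers(writer: AgentConfig, reviewer: AgentConfig) -> list[str]:
    """Return the independence levers the reviewer still shares with the writer."""
    shared = []
    if writer.model_family == reviewer.model_family:
        shared.append("model")
    if writer.prompt_objective == reviewer.prompt_objective:
        shared.append("objective")
    # The reviewer should run at least one check the writer path did not use.
    if not (reviewer.tools - writer.tools):
        shared.append("tools")
    return shared
```

An empty result means the reviewer is independent on all three levers; a harness could refuse to route medium- or high-risk PRs to a reviewer that returns anything else.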

Track this with a simple weekly metric split: high-severity misses from same-family reviews versus independent reviews. The delta tells you whether independence is improving real risk capture or only adding process overhead.
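A sketch of that weekly split, assuming each high-severity issue is logged with the week it surfaced, the review path its PR took, and whether the review caught it. The field names and the `same_family` and `independent` labels are hypothetical. A positive `delta` means independent reviews missed a smaller share of issues that week.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class HighSeverityIssue:
    week: str               # ISO week label, e.g. "2026-W08"
    review_path: str        # "same_family" or "independent"
    caught_by_review: bool


def weekly_miss_split(issues: list[HighSeverityIssue]) -> dict[str, dict[str, float]]:
    """Miss rate per week and review path, plus same-family minus independent delta."""
    # Per week and path: [missed, total]
    counts = defaultdict(lambda: {"same_family": [0, 0], "independent": [0, 0]})
    for issue in issues:
        bucket = counts[issue.week][issue.review_path]
        bucket[0] += 0 if issue.caught_by_review else 1
        bucket[1] += 1
    result = {}
    for week, paths in sorted(counts.items()):
        rates = {
            path: (missed / total if total else float("nan"))
            for path, (missed, total) in paths.items()
        }
        rates["delta"] = rates["same_family"] - rates["independent"]
        result[week] = rates
    return result
```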

A reference architecture for harnessed AI code review

If you are designing the harness today, a simple architecture looks like this:

Triage → Context build → Review → Verify → Summarize
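The five stages can be wired as small functions over a shared state object, so a failure is attributed to the stage that caused it. Every stage body here is a hypothetical placeholder: triage uses a toy path rule, and `review` flags added `TODO` lines in place of a real model call. Only the shape of the pipeline is the point of this sketch.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ReviewState:
    diff: str
    risk_tier: str = "low"
    changed_files: list[str] = field(default_factory=list)
    findings: list[dict] = field(default_factory=list)
    summary: str = ""
    completed: list[str] = field(default_factory=list)  # stages that finished


def triage(s: ReviewState) -> ReviewState:
    # Placeholder rule: any change under auth/ is high risk.
    s.risk_tier = "high" if "+++ b/auth/" in s.diff else "low"
    return s


def build_context(s: ReviewState) -> ReviewState:
    # Collect changed file paths from unified-diff headers.
    s.changed_files = [l[len("+++ b/"):] for l in s.diff.splitlines() if l.startswith("+++ b/")]
    return s


def review(s: ReviewState) -> ReviewState:
    # Stand-in for the model call: flag added lines that still contain TODO.
    current = ""
    for line in s.diff.splitlines():
        if line.startswith("+++ b/"):
            current = line[len("+++ b/"):]
        elif line.startswith("+") and "TODO" in line:
            s.findings.append({"path": current, "message": "unresolved TODO in added code"})
    return s


def verify(s: ReviewState) -> ReviewState:
    # Drop findings that do not point at a file in the built context.
    s.findings = [f for f in s.findings if f["path"] in s.changed_files]
    return s


def summarize(s: ReviewState) -> ReviewState:
    s.summary = f"{s.risk_tier} risk, {len(s.findings)} verified finding(s)"
    return s


STAGES: list[Callable[[ReviewState], ReviewState]] = [triage, build_context, review, verify, summarize]


def run_pipeline(state: ReviewState, stages=STAGES) -> ReviewState:
    for stage in stages:
        try:
            state = stage(state)
        except Exception as exc:
            # Name the failing stage so errors are isolated early.
            raise RuntimeError(f"stage {stage.__name__!r} failed") from exc
        state.completed.append(stage.__name__)
    return state
```

Swapping the placeholder `review` for a real model call, or `verify` for a stricter evidence check, changes one entry in `STAGES` without touching the rest of the pipeline.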

The Codex harness is open source (see the OpenAI Codex harness repository), which makes it a useful reference for how tooling and verification can be wired together.

Where Propel fits in the harness

Propel treats AI code review like a production system. We help teams route by risk tier, tune model selection, and measure review usefulness so leadership can see that AI review is reducing defects without slowing delivery.

If you want a full system blueprint, our guide to scaling engineering quality shows the same harness pattern applied across multiple repos.

Frequently Asked Questions

Are one-shot agents actually reliable?

They are reliable when the harness is strong. The model alone is not the system. The harness enforces tool access, verification, and escalation rules.

What is the first harness step to add?

Standardize inputs. Make sure every AI review sees the same diff format, risk tier, and test data so results are comparable and repeatable.

How do we keep AI review from slowing teams down?

Use risk-based routing. Low-risk PRs get quick automated checks, while high-risk changes get deeper analysis or human escalation.

Why should the reviewer be independent from the writer?

Independent reviewers reduce shared blind spots and confirmation bias. This usually increases high-risk defect detection compared with self review by the same model family.

How do we measure whether AI review is working?

Track review usefulness, high severity misses, and time to first review. These metrics map directly to outcomes leaders care about.

Ready to harness AI code review with higher signal? Propel helps teams build reliable review pipelines with verification loops and risk-based routing.