How Knowledge Distillation Works: An Academic Guide for Engineers

Feb 23, 2026

Knowledge distillation is one of the most practical ideas in modern model compression: transfer behavior from a larger teacher model to a smaller student while preserving as much task performance as possible. This article is intentionally academic and implementation-focused. It assumes you already understand gradient-based training, cross-entropy, and basic transformer architecture.

Scope and assumptions

Audience: Engineers with AI experience who want a precise training mental model, not a product overview.

Focus: Supervised distillation objectives, representation transfer, regime design, and diagnostics.

Exclusions: Legal/policy analysis and vendor-specific distillation attack narratives.

Key takeaways

Distillation combines a hard-label supervised loss with a soft-target matching loss, usually KL divergence at elevated temperature.

Temperature and capacity gap are first-order controls. If either is badly chosen, the soft-target signal becomes uninformative or optimization becomes unstable.

Modern pipelines rarely rely on logits alone. Hidden-state, attention, and relation losses often improve student sample efficiency.

Offline, online, self-distillation, and teacher-assistant regimes address different optimization constraints. Pick the regime based on your data and compute budget.

Reproducible evaluation is required: report calibration, latency, and robustness, not just top-1 accuracy.

1. Formal Problem Setup

Let z_t(x) and z_s(x) denote the teacher and student logits for input x with ground-truth label y. A canonical objective from Hinton et al. uses temperature-scaled distributions and combines soft and hard supervision, with temperature T > 0 and mixing weight alpha in [0, 1]:

p_t^T = softmax(z_t / T)

p_s^T = softmax(z_s / T)

L_KD = T^2 * KL(p_t^T || p_s^T)

L_CE = CE(y, softmax(z_s))

L_total = alpha * L_KD + (1 - alpha) * L_CE

The T^2 factor compensates for the gradient scaling induced by temperature: gradients of the soft-target term shrink roughly as 1/T^2, so without the factor a large T would weaken the KD term relative to the CE term. In practice, tune T and alpha jointly.
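To make the T^2 argument concrete, the sketch below evaluates the analytic gradient of KL(p_t^T || p_s^T) with respect to the student logits, which is (p_s^T - p_t^T) / T, at several temperatures. It uses only the Python standard library. The logits are illustrative values, not taken from any real model, and the helper names (`softmax`, `kd_grad`, `l2_norm`) are introduced here for illustration.

```python
import math

def softmax(logits, T=1.0):
    """Temperature-scaled softmax, stabilised by subtracting the max."""
    scaled = [z / T for z in logits]
    m = max(scaled)
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def kd_grad(z_t, z_s, T):
    """Gradient of KL(p_t^T || p_s^T) w.r.t. student logits: (p_s^T - p_t^T) / T."""
    p_t = softmax(z_t, T)
    p_s = softmax(z_s, T)
    return [(ps - pt) / T for ps, pt in zip(p_s, p_t)]

def l2_norm(v):
    return math.sqrt(sum(x * x for x in v))

z_t = [4.0, 1.0, -2.0]  # toy teacher logits (illustrative values)
z_s = [2.0, 1.5, -1.0]  # toy student logits (illustrative values)

for T in (1.0, 2.0, 4.0, 8.0):
    raw = l2_norm(kd_grad(z_t, z_s, T))
    # Compare the unscaled gradient norm with the T^2-corrected one.
    print(f"T={T}: |grad|={raw:.4f}  T^2*|grad|={T * T * raw:.4f}")
```

On this toy input the raw gradient norm falls steeply as T grows, while the T^2-scaled norm stays within the same order of magnitude, which is the behavior the correction is meant to produce.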

2. Why Soft Targets Help Beyond One-Hot Labels

Hard labels provide only the target class. Teacher soft targets encode class similarity structure, often called dark knowledge. For example, if the teacher assigns non-trivial probability mass to semantically nearby classes, the student receives richer geometry than a one-hot vector can provide.

This is why KD can improve optimization even when teacher and student are trained on the same dataset: the student learns relative preference among alternatives, not only binary correctness.
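A small numeric illustration of this point, assuming a hypothetical three-class problem and made-up teacher logits for an image of a cat: the one-hot label says nothing about how "dog" and "truck" relate to "cat", while the teacher's softened distribution does.

```python
import math

def softmax(logits, T=1.0):
    """Temperature-scaled softmax, stabilised by subtracting the max."""
    scaled = [z / T for z in logits]
    m = max(scaled)
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

classes = ["cat", "dog", "truck"]   # hypothetical label set
teacher_logits = [6.0, 4.0, -2.0]   # hypothetical teacher output for a cat image
one_hot = [1.0, 0.0, 0.0]           # what the hard label alone provides

print("one-hot:", dict(zip(classes, one_hot)))
for T in (1.0, 4.0):
    probs = softmax(teacher_logits, T)
    # "dog" keeps more mass than "truck" at every T; raising T makes
    # that ordering large enough to contribute meaningfully to the loss.
    print(f"T={T}:", {c: round(p, 4) for c, p in zip(classes, probs)})
```

The ordering dog > truck is present at T = 1 but carried by very small probabilities; at higher temperature the same ordering carries more weight in the KD term.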

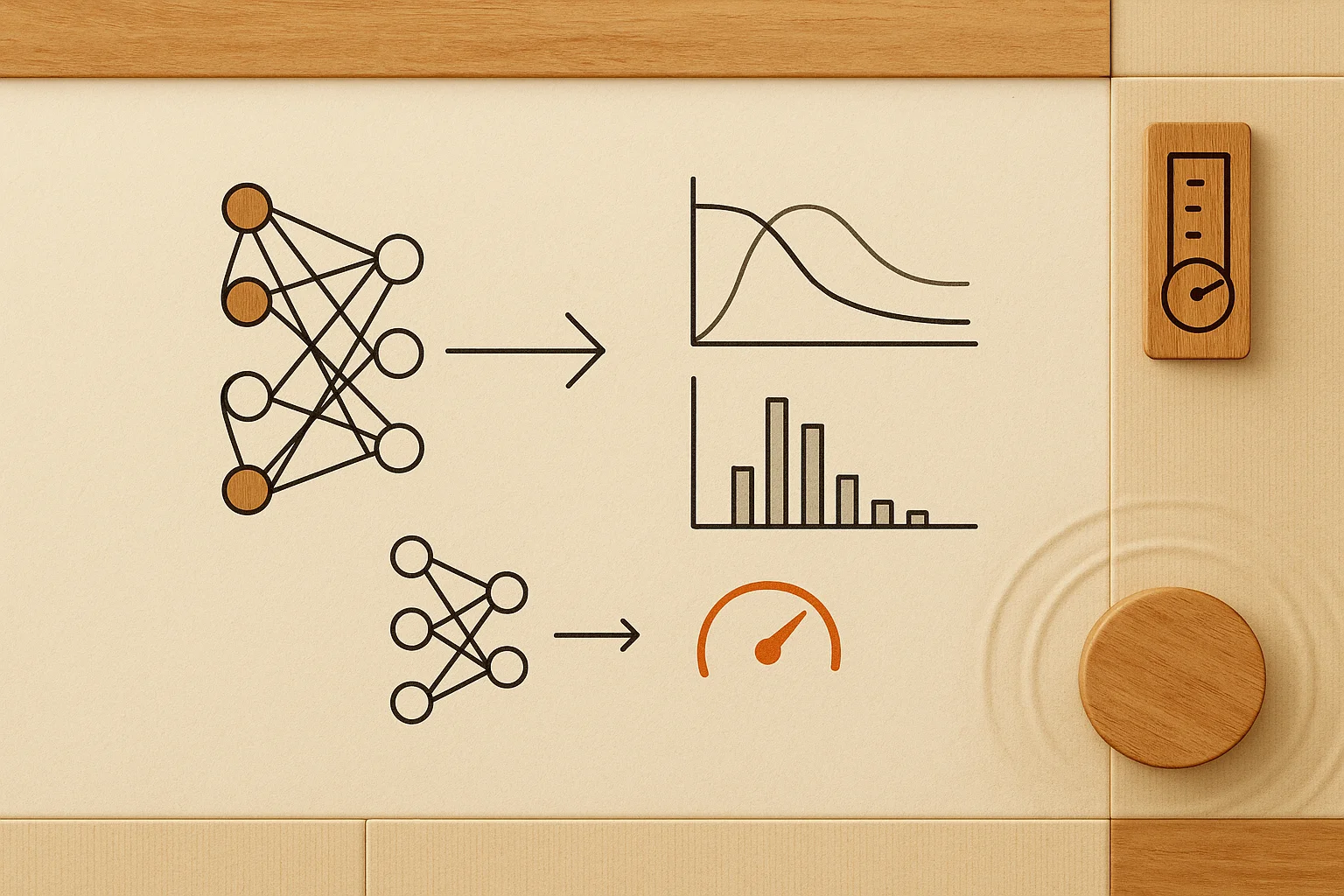

3. Distillation Signal Taxonomy

Distillation methods can be grouped by what is transferred from teacher to student:

Response-based (logits/probabilities)

Match output distributions with KL or CE under temperature scaling. Baseline approach in Hinton et al.

Feature-based (intermediate states)

Match hidden representations at selected layers using L2, cosine, or projection heads. FitNets and TinyBERT use this pattern.

Attention-based (map transfer)

Align attention maps or derived attention statistics. Useful in transformer students with fewer heads/layers.

Relation-based (sample geometry)

Transfer pairwise or triplet relations in representation space. Relational KD focuses on structure between samples, not only pointwise matches.
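As one illustration of the relation-based family, here is a minimal standard-library sketch of a distance-wise relational loss in the spirit of Relational KD. It compares mean-normalized pairwise distances between samples in teacher and student embedding space with a Huber penalty. The function names, the `delta` value, and the toy embeddings are assumptions for illustration, and the sketch assumes the batch contains at least two distinct embeddings so the mean distance is non-zero.

```python
import math

def pairwise_distances(embs):
    """Euclidean distance between every pair of embeddings in the batch."""
    n = len(embs)
    return [[math.dist(embs[i], embs[j]) for j in range(n)] for i in range(n)]

def normalize_by_mean(dist):
    """Divide by the mean off-diagonal distance so overall scale cancels out."""
    n = len(dist)
    off_diag = [dist[i][j] for i in range(n) for j in range(n) if i != j]
    mean = sum(off_diag) / len(off_diag)
    return [[d / mean for d in row] for row in dist]

def huber(a, b, delta=1.0):
    diff = abs(a - b)
    return 0.5 * diff * diff if diff <= delta else delta * (diff - 0.5 * delta)

def relation_distance_loss(teacher_embs, student_embs):
    """Mean Huber penalty between normalized teacher and student distance matrices."""
    t = normalize_by_mean(pairwise_distances(teacher_embs))
    s = normalize_by_mean(pairwise_distances(student_embs))
    n = len(t)
    pairs = [(i, j) for i in range(n) for j in range(n) if i != j]
    return sum(huber(t[i][j], s[i][j]) for i, j in pairs) / len(pairs)

# Toy batch: the student lives in a smaller space but preserves the geometry.
teacher_embs = [[0.0, 0.0, 0.0], [1.0, 0.0, 0.0], [0.0, 2.0, 0.0]]
student_same_geometry = [[0.0, 0.0], [0.5, 0.0], [0.0, 1.0]]
student_other_geometry = [[0.0, 0.0], [1.0, 0.0], [1.1, 0.0]]

print(relation_distance_loss(teacher_embs, student_same_geometry))
print(relation_distance_loss(teacher_embs, student_other_geometry))
```

Because only distances between samples are compared, teacher and student embeddings may have different dimensionality and no projection head is needed, which is one practical difference from pointwise feature matching.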

4. Regime Design: Offline, Online, Self, and Teacher-Assistant KD

Regime selection changes both optimization dynamics and compute cost:

| Regime | Teacher behavior | When it works best |
|---|---|---|
| Offline KD | Frozen pretrained teacher | Most production settings with stable teacher checkpoints |
| Online KD | Teacher and student co-train | Large-budget training where co-evolution may help |
| Self-distillation | Model teaches later versions of itself | Regularization and incremental quality gains |
| Teacher-assistant KD | Intermediate assistant bridges capacity gap | Very small student vs very large teacher |
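The teacher-assistant row can be read as a chain of ordinary offline distillation runs. The sketch below shows only that control flow: `distill_chain` and the `fake_distill` stub are hypothetical names, and the stub merely records which model taught which, standing in for a real training run.

```python
def distill_chain(models, distill_fn):
    """Distill through a list of models ordered from largest to smallest.

    Each trained student becomes the teacher for the next, smaller model.
    """
    if len(models) < 2:
        raise ValueError("need at least a teacher and a student")
    teacher = models[0]
    for student in models[1:]:
        teacher = distill_fn(teacher, student)
    return teacher

# Stub standing in for a full offline KD run (hypothetical).
log = []

def fake_distill(teacher, student):
    log.append((teacher, student))
    return student  # a real implementation would return the trained student

final = distill_chain(["teacher", "assistant", "student"], fake_distill)
print(log)
print(final)
```

Each stage here is an independent offline KD run, so the per-stage temperature and alpha can be tuned separately.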

5. Distillation for Transformers and LLM Pipelines

For transformer students, distillation usually mixes token-level and representation-level constraints:

Token-level KD: match teacher token distribution at each position.

Sequence-level KD: train on teacher-generated sequences (for example, the teacher's beam-search outputs) to reduce the mismatch between training and inference.

Hidden-state KD: align selected layer outputs using projection heads when dimensions differ.

Attention KD: align attention maps, optionally normalized per head.

DistilBERT and TinyBERT are canonical NLP examples: both combine output and intermediate signal transfer, with TinyBERT emphasizing layer-wise transformer distillation.
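For the token-level case, a minimal standard-library sketch for a single sequence follows. It computes the temperature-scaled KL at each position and averages over non-padding positions only. It assumes teacher and student share a vocabulary, and the function names, mask convention (1 for real tokens, 0 for padding), and toy logits are illustrative assumptions.

```python
import math

def log_softmax(logits, T=1.0):
    """Temperature-scaled log-softmax using the log-sum-exp trick."""
    scaled = [z / T for z in logits]
    m = max(scaled)
    lse = m + math.log(sum(math.exp(s - m) for s in scaled))
    return [s - lse for s in scaled]

def token_kd_loss(teacher_logits, student_logits, mask, T=2.0):
    """T^2-scaled KL(p_t^T || p_s^T), averaged over unmasked positions.

    teacher_logits, student_logits: [seq_len][vocab_size] nested lists.
    mask: 1 for real tokens, 0 for padding.
    """
    total, count = 0.0, 0
    for z_t, z_s, keep in zip(teacher_logits, student_logits, mask):
        if not keep:
            continue  # padding positions contribute nothing
        log_p_t = log_softmax(z_t, T)
        log_p_s = log_softmax(z_s, T)
        total += sum(math.exp(lt) * (lt - ls) for lt, ls in zip(log_p_t, log_p_s))
        count += 1
    return (T * T) * total / max(count, 1)

# Toy sequence of three positions over a three-token vocabulary.
teacher_seq = [[2.0, 0.0, -1.0], [0.5, 0.5, 0.0], [9.0, -9.0, 0.0]]
student_seq = [[1.0, 0.5, -1.0], [0.0, 1.0, 0.0], [-9.0, 9.0, 0.0]]
mask = [1, 1, 0]  # last position is padding

print(token_kd_loss(teacher_seq, student_seq, mask))
```

Without the mask, the padded position (where the toy logits disagree strongly) would dominate the average, which is a common source of noisy KD loss in batched sequence training.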

6. Minimal PyTorch-Style Training Loop

A compact pattern for response-based KD with hard-label blending:

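```python
import torch
import torch.nn.functional as F

# Assumed to exist already: teacher, student, loader, optimizer,
# plus scalar hyperparameters T (temperature) and alpha (KD weight).
teacher.eval()   # disable dropout and freeze normalization statistics
student.train()

for x, y in loader:
    # The teacher forward pass needs no gradients.
    with torch.no_grad():
        z_t = teacher(x)
    z_s = student(x)

    # Soft targets and student log-probabilities at temperature T.
    p_t = F.softmax(z_t / T, dim=-1)
    log_p_s = F.log_softmax(z_s / T, dim=-1)

    # kl_div takes log-probabilities as input and probabilities as target.
    kd = F.kl_div(log_p_s, p_t, reduction="batchmean") * (T * T)
    ce = F.cross_entropy(z_s, y)  # hard-label loss on unscaled logits
    loss = alpha * kd + (1.0 - alpha) * ce

    optimizer.zero_grad()
    loss.backward()
    optimizer.step()
```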

7. Hyperparameters That Matter Most

Temperature T: common search range is roughly 2-8. Too low behaves like hard labels; too high can flatten useful class structure.

Alpha weighting: tune with capacity in mind. Smaller students often need stronger CE support early, then higher KD weight later.

Layer mapping: for feature KD, map teacher-to-student layers explicitly and use projections for shape mismatch.

Data curation: KD amplifies teacher behavior. If teacher errors are systematic in a slice, students inherit them quickly.

Capacity gap: an extreme gap can make direct KD inefficient. Teacher assistant strategies can stabilize optimization.
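Two of the items above translate directly into small helpers. The sketch below shows a linear alpha ramp (CE-heavy early, KD-heavy later) and a uniform teacher-to-student layer mapping of the kind used for layer-wise transformer distillation. The function names and the default alpha endpoints are hypothetical starting points, not recommended values.

```python
def alpha_at(step, warmup_steps, alpha_start=0.1, alpha_end=0.7):
    """Linearly ramp the KD weight from alpha_start to alpha_end.

    The defaults are illustrative assumptions; tune them per task.
    """
    if warmup_steps <= 0:
        return alpha_end
    frac = min(max(step / warmup_steps, 0.0), 1.0)
    return alpha_start + frac * (alpha_end - alpha_start)

def uniform_layer_map(n_teacher, n_student):
    """Map student layer m (1-indexed) to teacher layer m * k, k = n_teacher / n_student."""
    if n_student <= 0 or n_teacher % n_student != 0:
        raise ValueError("this sketch assumes n_teacher is a multiple of n_student")
    k = n_teacher // n_student
    return {m: m * k for m in range(1, n_student + 1)}

print([round(alpha_at(s, 1000), 2) for s in (0, 250, 500, 1000, 2000)])
print(uniform_layer_map(12, 4))  # {1: 3, 2: 6, 3: 9, 4: 12}
```

In a training loop, `alpha_at(step, warmup_steps)` would replace a fixed alpha in the blended loss, and the layer map would select which teacher hidden states each student layer is matched against.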

8. Common Failure Modes and Diagnostics

Student under-capacity

Symptom: KD loss plateaus high while CE keeps dropping. Response: reduce teacher complexity, add assistant model, or simplify objective.

Teacher calibration issues

Symptom: student confidence inflates without accuracy gain. Response: calibrate teacher or reduce KD weight for high-entropy regions.

Domain shift between KD data and target data

Symptom: strong validation on distillation split, weak production metrics. Response: re-balance distillation corpus and include domain-specific CE anchors.

Optimization instability from objective imbalance

Symptom: loss oscillation and degraded convergence. Response: schedule alpha, warm-up with CE, and control gradient norms on auxiliary KD heads.

9. Evaluation Protocol for Engineers and Researchers

Do not evaluate distillation with a single scalar metric.

Primary task quality: accuracy/F1/exact match on in-domain and stress slices.

Calibration: ECE or Brier score when confidence quality matters.

Efficiency: latency percentiles, memory footprint, and throughput at target batch size.

Robustness: perturbation and domain-shift checks relative to teacher baseline.

Ablations: isolate logit KD, feature KD, and regime choices independently.
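The calibration metrics in this list are simple enough to compute without a dedicated library. The following is a minimal sketch of equal-width-bin ECE and the multiclass Brier score. The function names, the choice of 10 bins, and the toy predictions are assumptions for illustration.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """ECE with equal-width confidence bins.

    confidences: predicted probability of the chosen class, in [0, 1].
    correct: booleans, True where the prediction was right.
    """
    n = len(confidences)
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        idx = min(int(conf * n_bins), n_bins - 1)  # conf == 1.0 goes in the last bin
        bins[idx].append((conf, ok))
    ece = 0.0
    for items in bins:
        if not items:
            continue
        avg_conf = sum(c for c, _ in items) / len(items)
        accuracy = sum(1 for _, ok in items if ok) / len(items)
        ece += (len(items) / n) * abs(accuracy - avg_conf)
    return ece

def brier_score(prob_rows, labels):
    """Mean over samples of the squared error between probabilities and one-hot labels."""
    total = 0.0
    for probs, y in zip(prob_rows, labels):
        total += sum((p - (1.0 if k == y else 0.0)) ** 2 for k, p in enumerate(probs))
    return total / len(prob_rows)

# Toy check: an overconfident model that is right only once in four predictions.
confs = [0.9, 0.9, 0.9, 0.9]
hits = [True, False, False, False]
print(expected_calibration_error(confs, hits))
print(brier_score([[0.5, 0.5], [1.0, 0.0]], [0, 0]))
```

Computing the same metrics for teacher and student on identical slices makes it easier to tell whether miscalibration was inherited from the teacher or introduced during distillation.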

Frequently Asked Questions

When does distillation outperform plain fine-tuning?

Usually when the teacher has materially better generalization and the student has enough capacity to absorb that signal. Distillation then acts as a structured target prior.

Do teacher and student need the same tokenizer?

Not strictly, but mismatch complicates token-level KD. If tokenizers differ, feature- or sequence-level objectives are often easier to stabilize.

Is logits-only distillation enough for transformers?

It can work, but many transformer students improve with intermediate-state and attention transfer, especially when depth differs significantly.

How much distillation data is typically needed?

Enough to cover the operational data manifold. In practice, data quality and slice balance matter more than raw volume once the corpus is reasonably representative.

Further Reading (Primary Sources)

Hinton et al. (2015), Distilling the Knowledge in a Neural Network

Romero et al. (2014), FitNets: Hints for Thin Deep Nets

Zagoruyko and Komodakis (2016), Paying More Attention to Attention

Kim and Rush (2016), Sequence-Level Knowledge Distillation

Mirzadeh et al. (2019), Improved Knowledge Distillation via Teacher Assistant

Jiao et al. (2019), TinyBERT

Sanh et al. (2019), DistilBERT

Park et al. (2019), Relational Knowledge Distillation

Gou et al. (2020), Knowledge Distillation: A Survey